Data Structure Algorithms play a pivotal role in computer science and software development. In this article, we will explore the significance of data structure algorithms, their fundamental concepts, and the relationship between data structures and algorithms.

Basic Data Structures:

Arrays:

Arrays are one of the fundamental data structures, consisting of a collection of elements of the same data type stored in contiguous memory locations. They offer quick access to elements based on their index, making them efficient for retrieval but limited in flexibility for insertions and deletions.

Linked Lists:

Linked lists are dynamic data structures that consist of nodes, each containing a value and a reference to the next node. They come in various types, such as singly linked lists, doubly linked lists, and circular linked lists, each with its own advantages and use cases.

Stacks:

Stacks operate on the Last-In-First-Out (LIFO) principle, where the last element added is the first one to be removed. They are useful for tasks like expression evaluation and recursive function calls.

Queues:

Queues work on the First-In-First-Out (FIFO) principle, where the first element added is the first one to be removed. Linear queues and circular queues are two common implementations.

Trees:

Trees are hierarchical data structures with a root node connected to child nodes, forming a branching structure. Binary trees, AVL trees, and Red-Black trees are some essential variants.

Graphs:

Graphs consist of nodes connected by edges and are used to model relationships between objects. They can be directed or undirected, and algorithms based on graphs have a wide range of applications.

Advanced Data Structures:

Heaps:

Heaps are specialized trees that satisfy the heap property, making them efficient for extracting the minimum or maximum element in constant time. Min heaps and max heaps serve different purposes.

Hash Tables:

Hash tables use hash functions to map keys to specific locations, facilitating fast data retrieval. Collision handling techniques like chaining and open addressing are employed to deal with hash collisions.

Trie:

A trie, also known as a prefix tree, is a tree-like data structure used to store a dynamic set of strings efficiently. It excels at string-related operations like searching for a specific prefix.

B-Trees:

B-trees are balanced search trees designed to work efficiently on disks or other storage devices. They are commonly used in database management systems and file systems.

Disjoint Set Data Structure (Union-Find):

The disjoint set data structure maintains a collection of disjoint sets and supports merging and querying sets efficiently. It is essential for solving problems involving connectivity and component grouping.

Algorithm Analysis:

Time Complexity:

Time complexity measures how the runtime of an algorithm grows with the size of the input. Big O, Omega, and Theta notations express upper, lower, and tight bounds on time complexity, respectively.

Space Complexity:

Space complexity gauges the memory used by an algorithm concerning the size of the input. It is crucial for optimizing memory usage in resource-constrained environments.

Asymptotic Analysis:

Asymptotic analysis focuses on understanding the behavior of algorithms as the input size approaches infinity. It helps identify the most significant factors impacting an algorithm’s efficiency.

Best, Worst, and Average Case Analysis:

Algorithms can perform differently based on the characteristics of the input data. Analyzing their behavior in best, worst, and average-case scenarios aids in making informed design choices.

Sorting Algorithms:

Bubble Sort:

Bubble sort repeatedly steps through the list, compares adjacent elements, and swaps them if they are in the wrong order. Though simple, it is not efficient for large datasets.

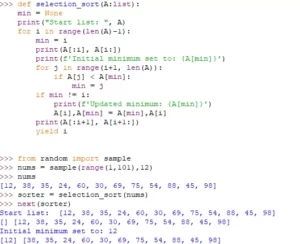

Selection Sort:

Selection sort divides the input into sorted and unsorted regions, repeatedly finding the minimum element from the unsorted region and placing it at the end of the sorted region.

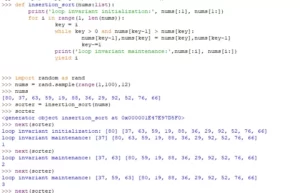

Insertion Sort:

Insertion sort builds the final sorted array one item at a time, comparing each element with the already sorted part and inserting it at the appropriate position.

Merge Sort:

Merge sort employs the divide-and-conquer strategy, breaking the list into smaller sublists, sorting them, and then merging them back together.

Quick Sort:

Quick sort also uses the divide-and-conquer approach, partitioning the list around a pivot element and recursively sorting the two resulting sublists.

Radix Sort:

Radix sort sorts elements by their individual digits or bits, making it suitable for integers and strings.

Searching Algorithms:

Linear Search:

Linear search checks each element in a list sequentially until the target element is found or the list is exhausted.

Binary Search:

Binary search operates on sorted lists, repeatedly dividing the search space in half until the target element is located.

Depth-First Search (DFS):

DFS is a graph traversal algorithm that explores as far as possible along each branch before backtracking.

Breadth-First Search (BFS):

BFS explores all the neighbor nodes at the current depth before moving on to nodes at the next level.

Shortest Path Algorithms:.

Dijkstra’s Algorithm:

Dijkstra’s algorithm finds the shortest paths from a source node to all other nodes in a weighted graph.

Bellman-Ford Algorithm:

The Bellman-Ford algorithm calculates the shortest paths in a weighted graph, even when negative edge weights are present.

Minimum Spanning Tree Algorithms:

Prim’s Algorithm:

Prim’s algorithm finds the minimum spanning tree of a connected and undirected graph.

Kruskal’s Algorithm:

Kruskal’s algorithm finds the minimum spanning tree by incrementally adding edges in ascending order of weights.

Dynamic Programming:

Memoization:

Memoization is a technique to optimize recursive algorithms by storing their results and reusing them for overlapping subproblems.

Tabulation:

Tabulation is an alternative dynamic programming approach, where solutions to subproblems are iteratively filled into a table.

Greedy Algorithms:

Knapsack Problem:

The knapsack problem is a classic optimization problem where items have both a value and weight, and the goal is to maximize the total value while not exceeding a given weight capacity.

Huffman Encoding:

Huffman encoding is a lossless data compression algorithm that creates variable-length codes for characters based on their frequencies.

Divide and Conquer:

Concept of Divide and Conquer:

Divide and conquer breaks a problem into smaller, more manageable subproblems, solving them recursively, and then combining the solutions to obtain the final result.

Examples of Divide and Conquer Algorithms:

Merge sort and quick sort are classic examples of algorithms that employ the divide-and-conquer strategy.

Backtracking:

Concept of Backtracking:

Backtracking is an algorithmic approach where the search space is explored incrementally, and if a solution is not found, the algorithm backtracks to explore alternative paths.

Examples of Backtracking Algorithms:

Sudoku solving and the N-Queens problem are commonly solved using backtracking.

Searching and Sorting in Data Structures:

Binary Search Trees (BST):

BSTs are binary trees where the left child is less than the parent, and the right child is greater. They allow for efficient searching, insertion, and deletion.

Heap Sort:

Heap sort uses a binary heap to efficiently sort elements in an array.

Balanced BSTs (AVL Trees, Red-Black Trees) for Sorting:

Balanced BSTs ensure that the tree height is kept in check, making them well-suited for sorting and searching operations.

Graph Traversal and Applications:

Depth-First Search (DFS):

DFS has several applications, including finding connected components in a graph and performing topological sorting for tasks with dependencies.

Breadth-First Search (BFS):

BFS can be used to find the shortest path between two nodes in an unweighted graph and discover connected components.

Applications of Data Structure Algorithms:

Database Management Systems:

Data structures and algorithms play a crucial role in organizing and managing data in database systems.

Networking Algorithms:

Routing and communication protocols in computer networks heavily rely on data structure algorithms.

Image Processing Algorithms:

Image processing techniques, such as edge detection and segmentation, use data structures to efficiently process pixels.

Artificial Intelligence and Machine Learning Algorithms:

Many AI and ML algorithms leverage data structures to represent and process complex data.

Challenges and Trade-offs in Data Structure Algorithm Design:

Time Complexity vs. Space Complexity:

There is often a trade-off between time and space complexity, and finding the right balance is crucial for optimal algorithm design.

Choosing the Right Data Structure for the Problem:

Selecting the appropriate data structure based on the problem requirements can significantly impact the algorithm’s efficiency.

Handling Large Datasets:

Data structures and algorithms must be designed with scalability in mind to handle large datasets efficiently.